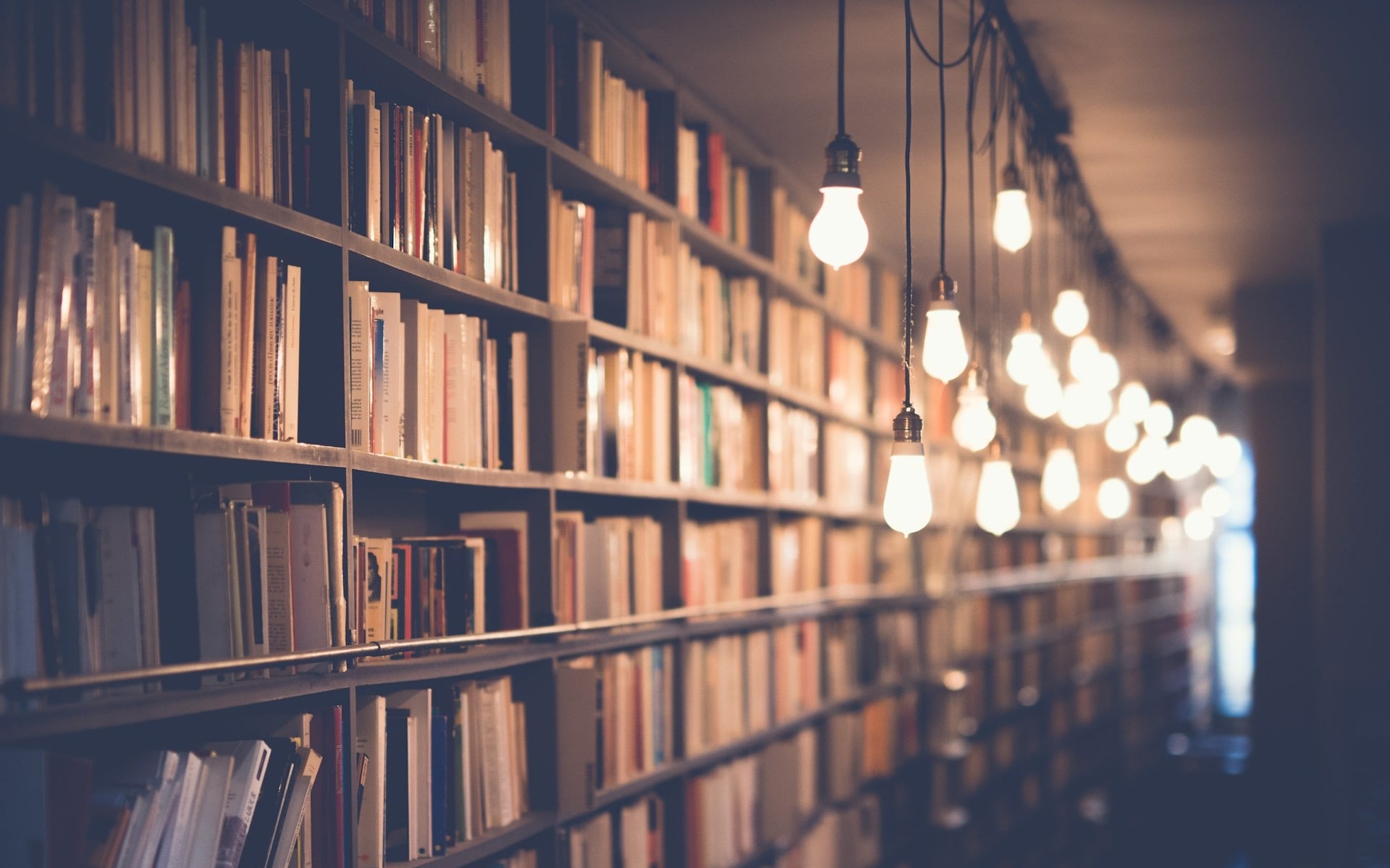

Photo: Koshiro K / Shutterstock.com

Google Assistant, Siri, Alexa, Cortana and even Bixby: just about everyone who’s used a smartphone or browsed the internet will know these names. These popular AI assistants have lived on our smartphones, smart speakers and other devices for a while now, helping us with basic queries and sometimes even complex questions, always just a prompt away.

But now, a new generation of AI assistants is emerging, one that’s far more intelligent and more detailed in its answers than what we’re used to. Many of you might have already tested out ChatGPT or one of the hundreds of tools based on OpenAI’s revolutionary GPT lineup of Large Language Models (LLMs).

If not, you might have played around with Google’s Bard or Microsoft’s Bing Chat. Both Google and Microsoft are integrating their respective versions of these AI chatbots into their search engines and making them more and more accessible by the day.

So far, these assistants have been kept separate from devices — accessible on apps and websites. Useable, yes, but not as well-integrated as the default AI assistant on your phone. However, with ChatGPT now getting the ability to see, hear and talk, that might change rather quickly.

What new features does ChatGPT get?

On September 25, OpenAI unveiled new voice and image recognition capabilities in ChatGPT. An update to the chatbot’s Android and iOS apps allows users to speak their queries instead of typing them and lets the chatbot respond in a synthesised voice. ChatGPT can also peek into your life now. Just upload a photo or capture one inside the ChatGPT app, and the chatbot can provide additional context about what’s in the image.

A few days later, on September 27, OpenAI removed the chatbot’s major restriction, allowing it to access the internet in real-time and provide direct links to its sources. While this feature is limited to ChatGPT Plus and Enterprise users, this means that for paying customers, the chatbot isn’t just limited to information before 2021 — increasing its utility significantly.

ChatGPT’s text-to-speech model was reportedly built in-house and can generate human-like audio from text and a few seconds of sample speech, while OpenAI’s Whisper speech recognition model transcribes the user’s spoken words into text. The chatbot can also speak in different tones and styles, as OpenAI shows off in its announcement.

As for the chatbot’s vision capabilities, they have been around since GPT-4 launched but weren’t discussed much, largely because OpenAI didn’t allow users to test the feature. With the latest update, however, users can ask ChatGPT to analyse images and fill in the gaps as and when required.

Most of these features are limited to Plus and Enterprise users, meaning non-paying users won’t be able to use them, at least for now. Regardless, they make ChatGPT a much more powerful tool than it already is. It is helpful in many more situations as it has access to the internet in real-time and allows the user to provide different kinds of input.

How do the new features change the ChatGPT user experience?

Well, for starters, it’ll spike the user base once again as more old and new users will want to try out the new features. You might not see much change in your user experience if you’re using the free version. Still, for paying users, the ability to talk to the chatbot instead of having to type every query can be helpful, especially if you’re using ChatGPT for research.

The fact that the chatbot can now directly link to its sources and access the latest information also makes it a much more powerful research tool, among other things. This will save a lot of time vetting the chatbot’s information as you now have direct access to the source of the information instead of having to verify it manually by snooping around the internet yourself.

As for the image analysis features, they might seem a lot like Google Lens on the surface, which is a pretty darn good service already. But the fact that you can chat back and forth with ChatGPT and ask for more information, instead of being spammed with a multitude of links about what the algorithm thinks is in the image, is a game changer.

Can ChatGPT take over AI assistants’ place?

As alluring as that might sound, ChatGPT is still a while away from replacing Google Assistant or Siri on your phone.

Google is way ahead of the competition in smartphone AI assistants, having constantly developed Google Assistant and added new features since its 2016 launch. Additionally, its Bard chatbot and PaLM 2 LLM can hold their own against OpenAI’s ChatGPT and GPT-4.

It’s hard to compare the two rivals’ underlying technologies directly, as both models were built and tested differently.

Still, GPT-4 and PaLM 2 are broadly comparable in capability, and Google is well aware of the competition.

Google is already integrating AI features in over 25 of its services. On October 4, Google announced that it’s integrating Assistant and Bard. Users will get Bard’s generative and reasoning capabilities packaged with Assistant’s omnipresent convenience.

You will be able to use voice, text or images to interact with this new version of Assistant, much like the new set of features that ChatGPT got, and it will be available on Android and iOS.

However, it’s best not to be too optimistic. For starters, the feature hasn’t launched yet; it’s “coming soon”, so we can’t speak to its effectiveness. Considering the pitfalls Bard has been through so far, it’d be wise to wait for a public release to see if the Assistant and Bard combination can rival ChatGPT’s implementation.

As Google and OpenAI battle for AI assistant superiority, rivals are also hard at work. Amazon is reportedly building its own AI-powered conversational chatbot, and while Apple’s Siri isn’t always the best AI assistant, we might see its capabilities being upgraded soon as well. Chinese company Baidu also claimed in June that its chatbot Ernie had outperformed ChatGPT.

If OpenAI is aiming for the top spot among AI assistants, it must account for the massive user bases that mainstream AI assistants have gathered over the years. At CES 2020, Google announced that Google Assistant had over 500 million monthly active users worldwide.

Surprisingly, Apple’s Siri beats Google Assistant with an estimated 600 million monthly active users, likely because it is tightly woven into Apple’s walled garden. Siri might be behind Google Assistant in sheer features and functionality, but as of 2020 the two hold an equal market share of 36% each.

Despite selling over 100 million supported devices, Amazon’s Alexa still only has about 25% of the digital assistant market and something to the tune of 200 million monthly active users. However, user base numbers might not be much of a problem for ChatGPT, which had grown to nearly 180 million users by August 2023, less than a year after its November 2022 launch.

ChatGPT remains the fastest-growing consumer software, having hit over 100 million active users in January 2023.

The final hurdle that ChatGPT needs to clear if it’s looking to become the go-to AI assistant is device integration. No matter how good it gets, it’s still an app that users must open and interact with manually. The convenience of saying “Hey Google”, “Hey Siri”, or just pressing a button to summon your assistant shouldn’t be underestimated.

So yeah, for now, you’ll continue seeing the familiar AI assistants we’ve used over the years to help you with everyday tasks. However, with Google’s upcoming Bard integration, rivals pushing to catch up and OpenAI constantly adding features to ChatGPT, the generative AI and AI assistant spaces are getting more competitive than ever.
