Photo: Koshiro K/Shutterstock.com

OpenAI has announced GPT-4, the next-generation model that succeeds GPT-3.5 and will power ChatGPT and Bing, among other services that use OpenAI’s API. The “large multimodal model” accepts both image and text inputs and produces text outputs.

Following months of rumours and weeks of speculation, GPT-4’s text input capability is being released to the general public through ChatGPT and to developers through the OpenAI API, which has a waitlist that interested developers must join. People can try GPT-4 via the $20-a-month ChatGPT Plus subscription.

OpenAI is currently working with Be My Eyes to test and prepare GPT-4’s image processing capabilities and make them widely available in the future. The partner company has released a GPT-4-based Virtual Volunteer tool that includes a dynamic image-to-text generator, where people can send images and receive “instantaneous identification, interpretation and conversational visual assistance for a wide variety of tasks”. The Virtual Volunteer waitlist is open for the iOS app and is coming soon for Android.

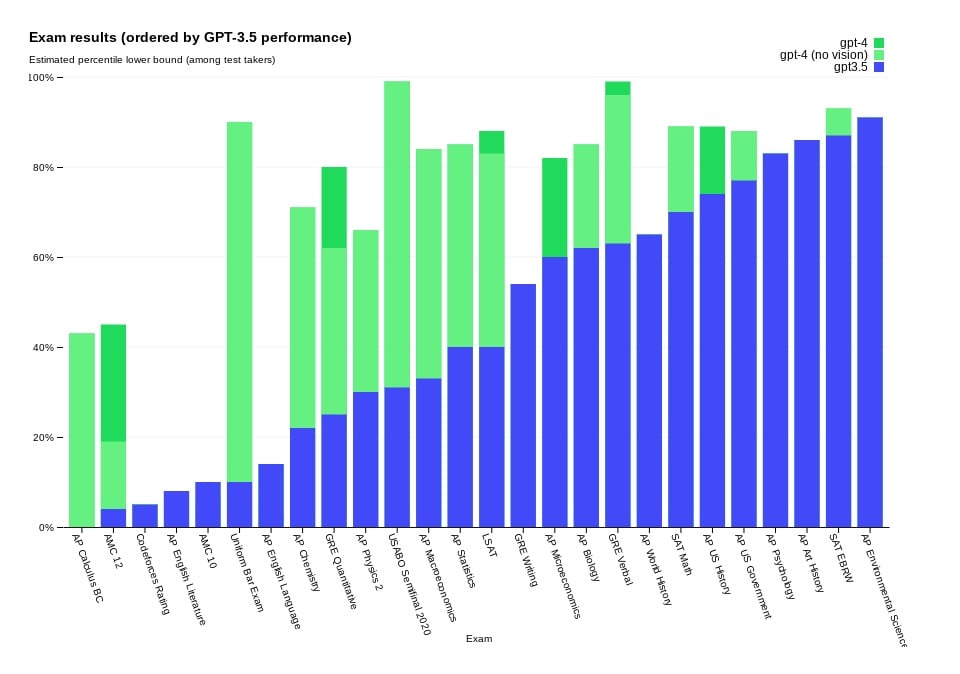

OpenAI claims that GPT-4 performs strongly on simulated exams, including the Uniform Bar Exam, LSAT, and SAT Math, and that it passes a simulated bar exam with a score around the top 10% of test takers, a large step up from GPT-3.5’s score around the bottom 10%. In OpenAI’s tests, GPT-4 also outperforms existing LLMs and most state-of-the-art (SOTA) models.

However, the company still believes that GPT-4 is far from perfect. Talking about GPT-4’s limitations, OpenAI said, “Despite its capabilities, GPT-4 has similar limitations as earlier GPT models. Most importantly, it still is not fully reliable (it ‘hallucinates’ facts and makes reasoning errors). While still a real issue, GPT-4 significantly reduces hallucinations relative to previous models”.

Still, the company has already partnered with Duolingo, Stripe and Khan Academy, among several others, to integrate GPT-4 into their products. OpenAI has also open-sourced Evals, its framework for automated performance evaluation of AI models.

While OpenAI said that GPT-4 has been through half a year of safety training, OpenAI CEO Sam Altman tweeted that GPT-4 is still flawed and that it “seems more impressive on first use than it does after you spend more time with it”.

“We’ve decreased the model’s tendency to respond to requests for disallowed content by 82% compared to GPT-3.5, and GPT-4 responds to sensitive requests (e.g., medical advice and self-harm) in accordance with our policies 29% more often,” OpenAI announced.
