Two AI agents, DeepNash and Cicero, have learned to play the board games Stratego and Diplomacy respectively from scratch, with DeepNash reportedly reaching human-expert level at Stratego. DeepNash comes from DeepMind, while Cicero is from Meta and CSAIL.

Going up against AI opponents in a game is nothing new, but so far, board games have been difficult for computers to conquer. The notable exception here is chess, where bots surpassed humans some time ago.

That said, both Stratego and Diplomacy are based on imperfect information. Both games keep crucial information hidden until a specific encounter reveals it, making them unpredictable and difficult for a computer to master. These games require guessing what the opponent thinks and what they think you’re thinking. As it turns out, computers weren’t great at bluffing until these two AI agents came along.

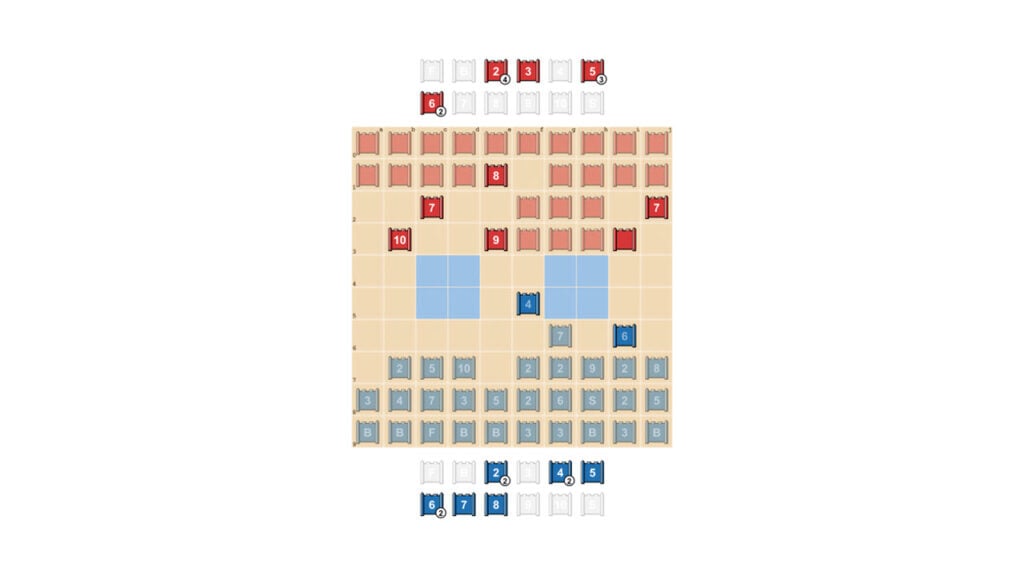

DeepNash taught itself how to play Stratego from scratch by repeatedly playing against itself. DeepMind claims that DeepNash is good enough to beat other Stratego AIs almost every time and human players 84% of the time. It’s based on a new algorithm called Regularised Nash Dynamics, which focuses on making moves that can’t be exploited or predicted rather than on outsmarting its opponents.
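To make "can't be exploited" concrete, here is a minimal sketch using rock-paper-scissors rather than Stratego. It is not Regularised Nash Dynamics and not DeepMind's code; the `PAYOFF` table and the `best_response_value` helper are illustrative names chosen for this example. It only measures how much an opponent who knows your move probabilities could gain with their best counter-move.

```python
# Our payoff in rock-paper-scissors: rows = our move, columns = opponent's move.
# Move order: rock, paper, scissors. +1 is a win, -1 a loss, 0 a draw.
PAYOFF = [
    [0, -1, 1],
    [1, 0, -1],
    [-1, 1, 0],
]


def best_response_value(strategy):
    """Expected gain per game of the opponent's best pure counter-move.

    `strategy` lists our probabilities of playing rock, paper and scissors.
    A result of 0 means no counter-move profits from knowing the strategy.
    """
    values = []
    for counter in range(3):
        # The game is zero-sum, so the opponent's payoff is the negative of ours.
        values.append(sum(-strategy[i] * PAYOFF[i][counter] for i in range(3)))
    return max(values)


uniform = [1 / 3, 1 / 3, 1 / 3]      # perfectly mixed play
rock_heavy = [0.5, 0.25, 0.25]       # predictable bias towards rock

print(best_response_value(uniform))     # nothing to gain against uniform play
print(best_response_value(rock_heavy))  # always playing paper gains 0.25 per game
```

The uniform strategy cannot be exploited, while the rock-heavy one loses a quarter of a point per game to an opponent who always plays paper. That gap is what an unexploitable player tries to drive to zero.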

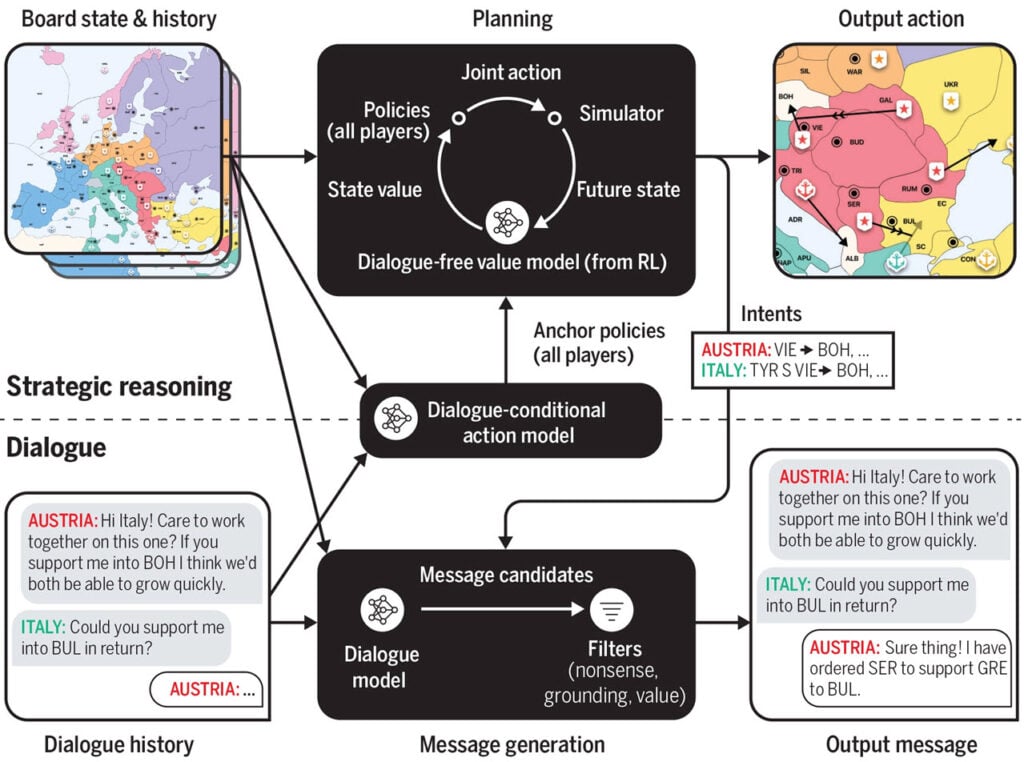

Cicero, on the other hand, works through a multi-step process that combines language models with strategic reasoning to come up with the best possible move, leaving the rest to luck.

- Step 1: Use the board state and the ongoing dialogue between the players and the AI to make an initial prediction of what everyone will do.

- Step 2: Refine the initial prediction using planning, then use it to form an intent for the AI itself and any partners.

- Step 3: Generate candidate output messages based on the board state, dialogue and intent.

- Step 4: Filter the candidate messages to maximise value and ensure consistency with the intent.
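The four steps above can be sketched as a small pipeline. This is a toy illustration, not Meta's implementation: every name (`Intent`, `predict_moves`, `plan_intent`, `generate_candidates`, `filter_messages`) is hypothetical, the "models" are simple string-matching stubs, and the final filter is assumed to apply to the AI's own drafted messages.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    own_move: str       # move the AI intends to make
    partner_move: str   # move the AI hopes its partner makes


def predict_moves(board_state, dialogue):
    """Step 1 (stub): initial guess of each player's move from board and dialogue."""
    prediction = {}
    for player, options in board_state.items():
        # Prefer a move that appears in the dialogue, else fall back to the first option.
        mentioned = [move for move in options if any(move in msg for msg in dialogue)]
        prediction[player] = mentioned[0] if mentioned else options[0]
    return prediction


def plan_intent(prediction, me, partner):
    """Step 2 (stub): a real planner would refine the prediction; this one keeps it."""
    return Intent(own_move=prediction[me], partner_move=prediction[partner])


def generate_candidates(intent, partner):
    """Step 3 (stub): draft a few messages, some deliberately off-target."""
    return [
        f"{partner}, I will play {intent.own_move}; can you play {intent.partner_move}?",
        f"{partner}, let's talk later.",
        "",
    ]


def filter_messages(candidates, intent):
    """Step 4 (stub): keep non-empty drafts that mention both intended moves."""
    return [
        msg for msg in candidates
        if msg and intent.own_move in msg and intent.partner_move in msg
    ]


# Hypothetical board: each player maps to the moves available to them.
board_state = {
    "AI": ["A PAR - BUR", "A PAR H"],
    "ENGLAND": ["F LON - ENG", "F LON H"],
}
dialogue = ["ENGLAND: I plan F LON - ENG this turn."]

prediction = predict_moves(board_state, dialogue)
intent = plan_intent(prediction, me="AI", partner="ENGLAND")
messages = filter_messages(generate_candidates(intent, "ENGLAND"), intent)
print(messages)
```

Only the draft that states both intended moves survives the filter, which is a crude stand-in for keeping messages consistent with the intent.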

If that sounds like a lot, that’s because Diplomacy is a notoriously hard game, even for humans. It often requires devious tactics, false promises, outright backstabbing and an ability to communicate with other players, all of which are extremely hard for an AI to handle.

In a test on WebDiplomacy.net, the AI ranked second in a league of 19 players, generally outplaying its opponents. That said, it still slips up at times, including losing track of conversations with other players or making mistakes that a human player likely wouldn’t.
