Opera has introduced a new feature allowing users to download 150 local Large Language Model (LLM) variants from around 50 model families directly within its browser through the AI Feature Drops program.

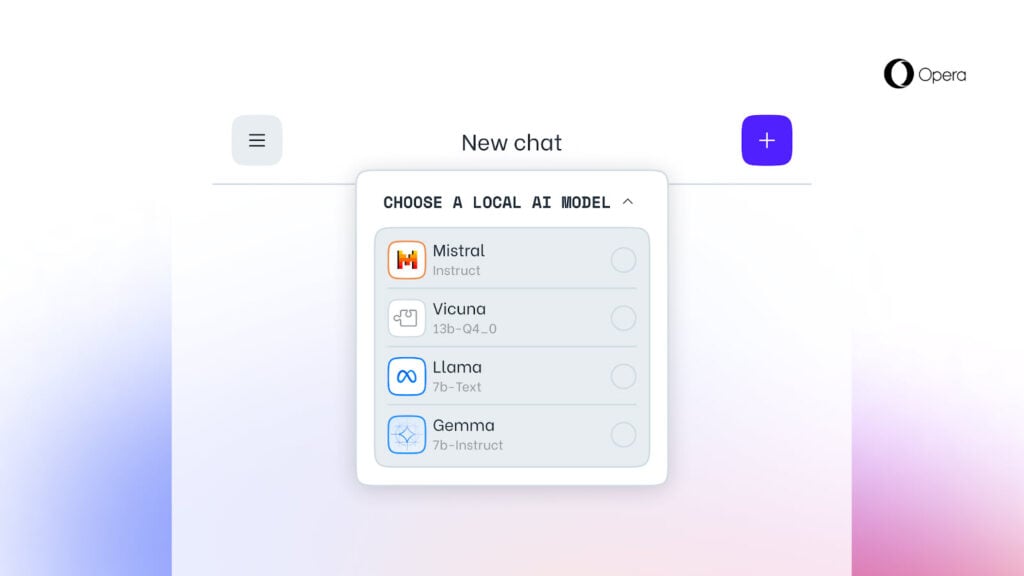

The newly integrated local LLM variants include popular models such as Meta’s Llama, Vicuna, Gemma from Google, and Mixtral from Mistral AI. These variants are now accessible in Opera One’s developer stream, offering users enhanced control and confidentiality over their AI interactions.

This represents a novel integration of AI within a web browser. By incorporating local LLMs, Opera lets users utilise AI capabilities while keeping their data on their own devices.

Users can access these local LLMs within Opera One’s developer stream and select their preferred model for prompt processing, based on individual preferences and performance needs. However, each local LLM variant requires between 2 and 10 GB of storage, and response speed depends on the user’s hardware.

“Using local large language models means users’ data is kept locally, on their device, allowing them to use AI without the need to send information to a server. We are testing this new set of local LLMs in Opera One’s developer stream as part of our AI Feature Drops Program, which allows you to test early, often experimental versions of our AI feature set,” noted Julia Szyndzielorz, Senior Public Relations Manager at Opera.

If a user’s processor is slow, answers may take noticeably longer to arrive than they would from a server-based LLM.

To test the feature, users must update Opera to the latest version, open Aria Chat, and click on Choose local mode. Then, they must search for and download the version they like. After finishing the download, they must click the menu button, select Choose local mode again, and then select the model they have downloaded to start the chat.

This initiative also paves the way for potential future use cases, such as browsers that draw on a user’s historical input to personalise the browsing experience while keeping that data on the device.
