On Monday evening, Facebook, Instagram and WhatsApp went down globally for six to seven hours. According to the company, configuration changes on the backbone routers that coordinate network traffic between Facebook's data centres caused the outage.

In an announcement on Monday, Facebook apologised, stating that its services are now back online and that support teams are working to restore the entire operation as soon as possible.

In layman's terms, the outage happened because the DNS names of Facebook and its related services could not be resolved and the IP addresses of its infrastructure were unreachable. As Cloudflare explained, it was the equivalent of someone pulling the cables from the servers in all of Facebook's data centres at once.


Why were Facebook, Instagram and WhatsApp down?

Initially, at 15:51 UTC, Cloudflare opened an internal incident entitled “Facebook DNS lookup returning SERVFAIL” because it feared that something was wrong with its own 1.1.1.1 DNS resolver. On deeper investigation, however, the company realised the issue was more serious.
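
For readers unfamiliar with the term, SERVFAIL is one of the response codes carried in the header of every DNS reply, and it signals that the resolver could not obtain an answer. The following is a minimal sketch of reading that code from a raw DNS message. The function name `dns_rcode` and the hand-built sample header are illustrative assumptions; they are not taken from Cloudflare's or Facebook's tooling.

```python
import struct

# Response codes defined in RFC 1035, section 4.1.1.
RCODES = {
    0: "NOERROR",
    1: "FORMERR",
    2: "SERVFAIL",
    3: "NXDOMAIN",
    4: "NOTIMP",
    5: "REFUSED",
}


def dns_rcode(message: bytes) -> str:
    """Return the response code name from a raw DNS message (hypothetical helper)."""
    if len(message) < 12:
        raise ValueError("a DNS header is 12 bytes long")
    # The header is six 16-bit big-endian fields: ID, flags and four counts.
    _id, flags, _qd, _an, _ns, _ar = struct.unpack("!6H", message[:12])
    code = flags & 0x000F  # RCODE is the low four bits of the flags field
    return RCODES.get(code, f"RCODE{code}")


# Hand-built header for illustration only: a response (QR=1) with RD=1, RA=1
# and RCODE=2, which is what a resolver sends when it cannot get an answer.
sample = struct.pack("!6H", 0x1234, 0x8182, 1, 0, 0, 0)
print(dns_rcode(sample))  # SERVFAIL
```

A SERVFAIL differs from NXDOMAIN: the former means the resolver failed to get an answer, while the latter means the name does not exist.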

As mentioned above, the root cause was a faulty configuration change on the backbone routers that coordinate network traffic between Facebook's data centres. The change also took down many of the company's internal tools, severely hampering its ability to diagnose and resolve the issue.

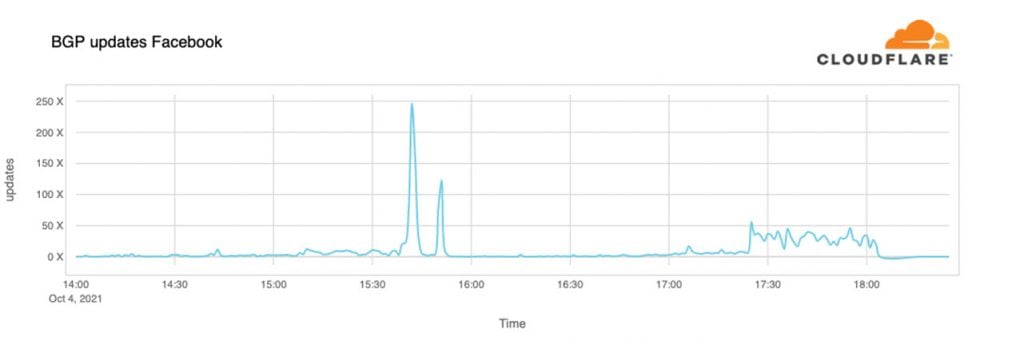

At around 15:40 UTC on Monday, Cloudflare noted a peak of routing changes from Facebook; that’s when the issue started. As Facebook pushed a faulty BGP (Border Gateway Protocol) update for their backbone routers, their services began disappearing from the internet.

At 15:58 UTC, Cloudflare noticed that Facebook had stopped announcing the routes to its DNS prefixes. This meant that, at the very least, Facebook's DNS servers were unavailable, so Cloudflare's 1.1.1.1 DNS resolver could no longer answer queries for Facebook or Instagram names.
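
To illustrate why a withdrawn prefix makes servers unreachable, here is a toy sketch of a routing table with longest-prefix matching. It is not a BGP implementation: the `lookup` helper, the peer name and the prefixes are all hypothetical, and the prefixes come from address ranges reserved for documentation rather than from Facebook's network.

```python
import ipaddress


def lookup(table, address):
    """Return the next hop for `address` by longest-prefix match, or None."""
    addr = ipaddress.ip_address(address)
    matches = [net for net in table if addr in net]
    if not matches:
        return None  # no route: packets to this address cannot be forwarded
    best = max(matches, key=lambda net: net.prefixlen)
    return table[best]


# Hypothetical routes learned from a peer (documentation prefixes only).
dns_prefix = ipaddress.ip_network("192.0.2.0/24")      # imagined DNS servers
web_prefix = ipaddress.ip_network("198.51.100.0/24")   # imagined other services
table = {dns_prefix: "peer-A", web_prefix: "peer-A"}

dns_server = "192.0.2.53"
before = lookup(table, dns_server)

# The peer withdraws the DNS prefix, so the route disappears from the table.
del table[dns_prefix]
after = lookup(table, dns_server)

print(before, after)  # peer-A None
```

In this simplified model the DNS server itself may be perfectly healthy; once nobody announces a route to its prefix, the rest of the internet simply has no path to it.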

At the same time, other Facebook IP addresses remained routed but were of little use, because without DNS the services behind them could not be found; the entire network was left effectively unavailable. As a direct consequence of the faulty update, DNS resolvers around the world could no longer resolve Facebook's domain names, which also had a knock-on effect on Cloudflare.

Because Facebook stopped announcing its DNS prefixes through BGP, Cloudflare's and everyone else's DNS resolvers had no way to reach Facebook's nameservers. As a result, 1.1.1.1, 8.8.8.8 and other major public DNS resolvers began returning SERVFAIL responses. The failures also triggered a wave of additional DNS traffic, as apps don't take an error for an answer and retry aggressively. Human behaviour factored in too, as more and more people kept trying to connect to these services.
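
The effect of aggressive retries on traffic volume can be estimated with some simple arithmetic. The sketch below compares a client that retries every second with one that uses capped exponential backoff, a common technique for reducing retry load. The function names, the five-minute window and the delay parameters are assumptions chosen for illustration; the source does not describe how any particular app retries, and jitter is omitted for simplicity.

```python
def backoff_delays(attempts, base=1.0, factor=2.0, cap=60.0):
    """Delays in seconds before each retry: base * factor**i, capped at `cap`."""
    return [min(cap, base * factor ** i) for i in range(attempts)]


def retries_within(window, delays):
    """Count how many retries fit into `window` seconds given successive delays."""
    elapsed = 0.0
    count = 0
    for delay in delays:
        elapsed += delay
        if elapsed > window:
            break
        count += 1
    return count


window = 300.0  # a five-minute slice of the outage

# A client that retries once per second, with no backoff.
fixed = retries_within(window, [1.0] * 1000)

# A client that doubles its delay each time, up to one minute.
backed_off = retries_within(window, backoff_delays(20))

print(fixed, backed_off)  # 300 9
```

Under these assumptions a single client without backoff sends over thirty times as many queries in five minutes, which gives a sense of how quickly retry traffic can add up across millions of devices.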

As people started looking for alternatives and for places to discuss what was going on, services that were still up, such as Signal and Twitter, saw a sudden rise in DNS activity.

At around 21:00 UTC, Cloudflare noted renewed BGP activity from Facebook, which peaked at 21:17 UTC. This was Facebook attempting to fix the faulty configuration changes. Eventually, Facebook's DNS came back at around 21:20 UTC.

Their services will still take some time to become fully operational, but as of 21:28 UTC, Facebook seems to be online and connected to the global internet with its DNS functional again.
