Microsoft researchers have announced a new AI model called VALL-E that can replicate a person’s voice given a three-second sample. The AI goes one step further and can even attempt to maintain the person’s emotional tone when synthesising their speech.

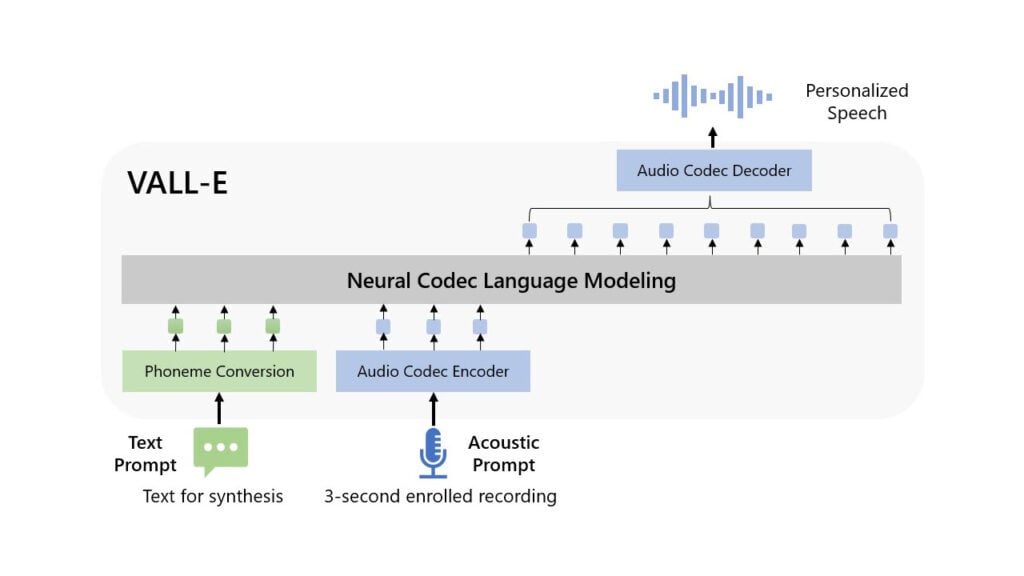

The model builds on Meta’s EnCodec technology, announced in October 2022. Unlike conventional text-to-speech methods, which synthesise audio by manipulating waveforms, VALL-E breaks down how a person sounds into discrete audio codec codes, or ‘tokens’, and generates new ones from text and acoustic prompts.

The tokenisation is handled by EnCodec. VALL-E, trained on the LibriLight audio library (also compiled by Meta), then predicts how the voice would sound when speaking text that goes beyond the three-second voice prompt.
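To give a rough feel for what turning audio into ‘tokens’ means, here is a toy sketch in Python. It is not EnCodec or VALL-E: EnCodec learns its encoder and codebooks with a neural network, whereas this example assumes a tiny hand-written codebook and a frame size of two samples, both made up for illustration. Each frame of audio is replaced by the index of the closest codebook entry, and that list of indices is the kind of discrete sequence a language-model-style system can then work with.

```python
# Toy illustration only: this is NOT EnCodec or VALL-E.
# The codebook values and frame size below are hypothetical.

def frame_signal(samples, frame_size):
    """Split samples into consecutive fixed-size frames, dropping any remainder."""
    return [samples[i:i + frame_size]
            for i in range(0, len(samples) - frame_size + 1, frame_size)]


def nearest_code(frame, codebook):
    """Return the index of the codebook entry closest to the frame
    (squared Euclidean distance)."""
    def distance(entry):
        return sum((a - b) ** 2 for a, b in zip(frame, entry))
    return min(range(len(codebook)), key=lambda i: distance(codebook[i]))


def tokenize(samples, codebook, frame_size):
    """Map a waveform to a list of discrete token ids."""
    return [nearest_code(f, codebook) for f in frame_signal(samples, frame_size)]


def detokenize(tokens, codebook):
    """Rebuild an approximate waveform by concatenating codebook entries."""
    out = []
    for t in tokens:
        out.extend(codebook[t])
    return out


# Hypothetical 4-entry codebook of 2-sample frames.
CODEBOOK = [[0.0, 0.0], [0.5, 0.5], [1.0, -1.0], [-1.0, 1.0]]

samples = [0.1, -0.1, 0.9, -0.8, 0.4, 0.6, -0.9, 1.1]
tokens = tokenize(samples, CODEBOOK, frame_size=2)
approx = detokenize(tokens, CODEBOOK)
print(tokens)
print(approx)
```

The reconstructed samples only approximate the originals, which is the trade-off of any codec: a compact sequence of tokens in exchange for some lost detail.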

The result is an AI model that can sometimes predict quite accurately how a person will sound and, in any case, sounds more natural than typical robotic text-to-speech output.

As for the training data, Meta’s LibriLight library contains 60,000 hours of English speech from more than 7,000 speakers, mostly taken from audiobooks publicly available on LibriVox. For the model to generate a good result, the prompt’s voice should closely resemble one in the training set.

While this might seem like a rather limited use case, the model’s creators think it can be used for high-quality text-to-speech applications (in English only, for the time being) or for speech editing from a text transcript, as shown on the model’s demo site.

Another possible use case is audio content generation when paired with other AI models like GPT-3, which Microsoft is already trying to integrate into its Office suite and search engine.

Of course, VALL-E’s speech synthesis abilities also pose a security risk, similar to what we’ve seen with deepfakes in the past. As companies figure out the right use cases for these models, we might see complete bans on VALL-E, just like those its text-based counterpart ChatGPT has faced.
