I’m sure at some point in your life you’ve heard someone going on about dedicated graphics cards and how they are so much better than integrated ones. Especially when buying a laptop, whether it has a dedicated GPU is the first question that pops into many people’s minds.

In this article, we bring you the differences between dedicated and integrated GPUs.

What are Integrated GPUs?

Integrated GPUs don’t have their own VRAM. Instead, they share some part of RAM and use it as VRAM.

Suppose you have a laptop with 8GB of RAM. In this case, the integrated GPU can use up to 50% of your RAM as its graphics memory. How much it actually takes depends on the task you’re performing on your computer. Heavy tasks, especially games, tend to tax the RAM much more than lighter, less demanding ones.
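To make the arithmetic concrete, here is a minimal Python sketch of the 8GB example. The 50% ceiling is simply the figure quoted above, and the function name `split_shared_memory` is made up for illustration; real systems adjust the shared amount dynamically.

```python
def split_shared_memory(total_ram_gb: float, max_share: float = 0.5) -> tuple:
    """Return (max graphics memory, RAM left for everything else) in GB.

    max_share is an assumed upper limit (50% here), not a fixed rule.
    """
    graphics_gb = total_ram_gb * max_share
    return graphics_gb, total_ram_gb - graphics_gb


graphics_gb, remaining_gb = split_shared_memory(8)
print(f"Up to {graphics_gb:.0f}GB for graphics, {remaining_gb:.0f}GB left for the system")
# prints: Up to 4GB for graphics, 4GB left for the system
```

In the worst case, then, a graphics-heavy task on this laptop leaves only about half of the RAM for everything else.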

It’s not just about VRAM, either. Integrated GPUs are also usually slower than their dedicated counterparts. They have less raw processing power and cannot handle demanding scenarios as well as dedicated GPUs do.

Why do we use Integrated GPUs?

The main advantage of using an integrated GPU is that it is cheaper than a dedicated GPU, which in turn means a cheaper computer or laptop.

Integrated GPUs also generate a lot less heat and use significantly less power. This allows manufacturers to build PCs with much slimmer and lighter chassis. Since integrated GPUs use a lot less power, battery life also gets a bump.

The target audience for integrated GPUs is also quite wide. They’re basically perfect for anyone who only does everyday tasks such as watching videos, browsing the web, 2D gaming, general word processing and so on.

These activities are not very graphics-intensive, so an integrated GPU suffices. That doesn’t mean you won’t be able to do more graphically demanding tasks on your PC; just be ready for some extremely choppy performance.

What are Dedicated GPUs?

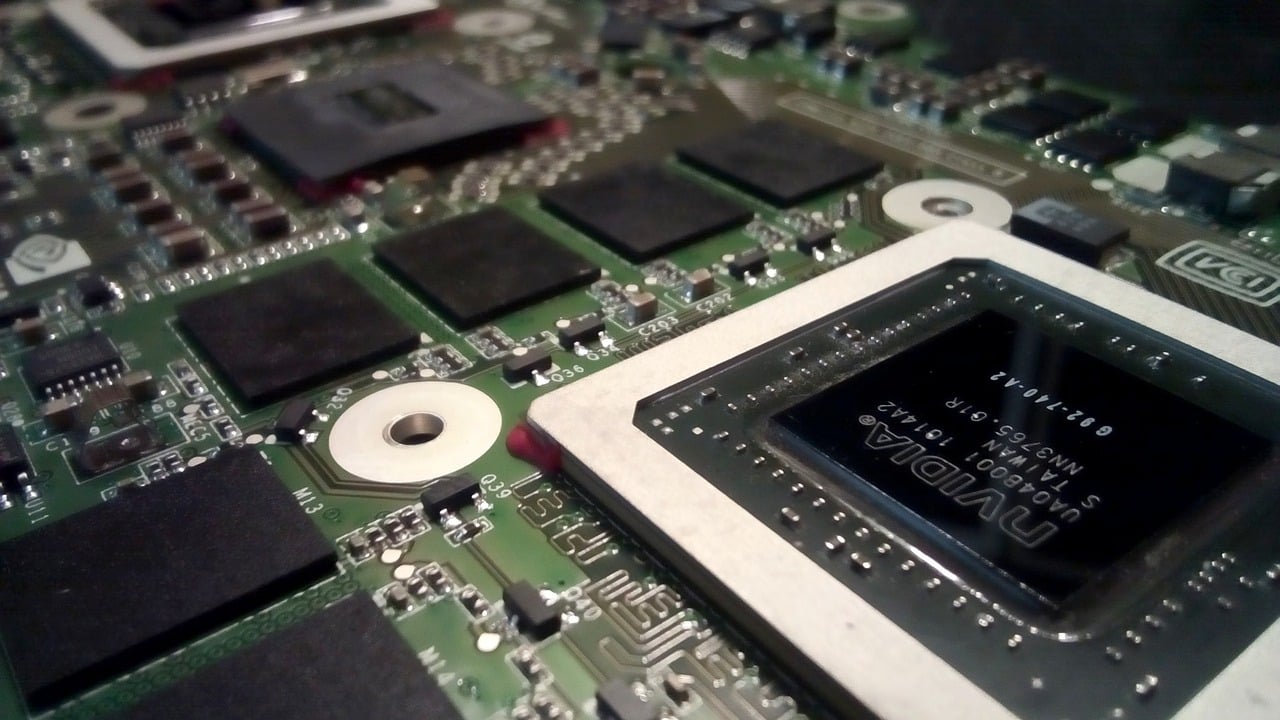

Dedicated (or discrete) GPUs, as the name suggests, have their own graphics memory.

For example, the laptop I’m writing this article on has an Nvidia GeForce 940MX graphics card with 4GB of VRAM, plus 8GB of RAM. Since this machine is running a dedicated GPU, I get to use my full 8GB of RAM and get 4GB of VRAM as well.

Dedicated GPUs are faster and more efficient at handling heavy loads. Since they have their own memory and don’t borrow from the computer’s RAM, they don’t hit the rest of your system as hard when running heavy graphics loads.

Why do we use dedicated GPUs?

Dedicated GPUs are the go-to GPUs for gamers, graphic designers, content creators, streamers and basically any other professional who needs more graphics horsepower.

All this power comes at a cost. Dedicated GPUs tend to get hot, and if you don’t have appropriate cooling, this can turn into a problem really quickly.

Not only will you experience thermal throttling on your system (lowered performance because of too much heat), but there’s also a chance that prolonged heating can damage some parts. If you’re a serious gamer or use a multi-monitor setup, the problem escalates even more.

These cards also hog power. So, if you’re running a laptop with a dedicated GPU, don’t expect a lot of battery life. Even on desktop PCs, they need behemoth PSUs to keep them fed properly.

The price difference is also a big factor here. Dedicated GPUs are way more expensive than integrated ones. You can end up paying hundreds of dollars more for a machine with a dedicated GPU than for one with integrated graphics.

Laptop manufacturers have long been trying to fix these problems. Most modern laptops now have a dual GPU system, which means there’s an integrated GPU handling light loads and a dedicated GPU that comes into play whenever there’s a need for more power.

Such an implementation helps balance battery life against performance. It also allows for better thermal management.
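As a rough illustration of the idea, here is a toy Python sketch of a dual-GPU switching policy. Everything in it is an assumption made for the example: the function name `pick_gpu`, the 60% threshold and the load figures are invented, and real laptops make this decision in the graphics driver rather than with a single cut-off.

```python
def pick_gpu(load_percent: float, threshold: float = 60.0) -> str:
    """Pick a GPU for the current graphics load (0-100) on a dual-GPU laptop.

    The threshold is a made-up value for illustration only.
    """
    if load_percent >= threshold:
        return "dedicated"   # more power, more heat, shorter battery life
    return "integrated"      # cooler and easier on the battery


# Hypothetical load figures for a few everyday tasks.
for task, load in [("web browsing", 10), ("video playback", 25), ("3D game", 90)]:
    print(f"{task}: {pick_gpu(load)} GPU")
```

Light tasks stay on the integrated GPU, and the dedicated GPU only wakes up when the load calls for it.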
